AI had already been the talk of the town for a while, and ChatGPT simply broke the internet after its release. People couldn’t get enough of the astonishing power of the gpt-3.5-turbo model (from OpenAI) behind this genius tool.

But it didn’t stop there!

Recently, social media feeds across the globe were flooded with rumors surrounding GPT-4, spread through a widely shared image and a copied snippet of text.

Some were overwhelmed by the fast-paced AI development happening around them.

Others, on the other hand, called it a hoax after OpenAI CEO Sam Altman dismissed the rumors about the number of parameters in an interview.

But after days of speculation, the GPT-4 rumors turned out to be true!

(Well, partially to be specific…)

You see, OpenAI’s GPT-4 model was indeed released on 14th March 2023.

But has the world ‘changed’ since then?

Let’s find out!

(But first...)

Introduction to GPT Language Model

For those who still don’t know:

GPT stands for “Generative Pre-trained Transformer.” It is an LLM (Large Language Model) used for natural language processing (NLP) tasks such as language translation, text summarization, and question answering.

GPT, in short, is a Generative AI model. It was developed by OpenAI, a leading organization in the field of artificial intelligence.

✅ The most popular example of GPT would be ChatGPT, which took the whole internet by storm in November-December of 2022.

Initially, ChatGPT ran on gpt-3.5-turbo, an iteration of the GPT model.


What is GPT-4?

So, GPT-4 is simply the latest version of OpenAI’s GPT model, which comes after the much-hyped GPT-3 (duh!)

It has been made available to ChatGPT Plus users, and API access is being rolled out via a waitlist.

So, what does this GPT-4 model come with?

Did this release live up to the prior hype?

Let’s delve right into that!

👉 I’ve compiled a list of the Best GPT-4 Tools out there. Check it out if you’re interested!

What’s New in GPT-4?

To understand the “expectation vs. reality” scenario of this recently released GPT model, we must learn about the new additions in this version.

So, let’s go through them one by one:

Visual Input: The First Step Towards Multi-Modality

Yes, it’s finally here!

Multimodality!

GPT-4 (and also ChatGPT, if you enable this model) can now analyze and process images, whereas previous versions could only process text.

⚠️ But here are the catches:

- It’s currently exclusive to ChatGPT Plus. Free users can’t access it as of now.

- You can’t “upload” any image to the chat interface (it might change with the developer API). Rather, you’ll have to upload it elsewhere and provide ChatGPT with the link.

Here’s a quick demonstration of how image inputs work now ⤵️

As you can see from the video, the possibilities are simply endless, considering the cross-functional applications between text and image!

So far, it can —

✅ Detect multiple objects in an image

✅ Find reasoning and logical expressions relevant to an image.


✅ It can even process handwritten text from an image (as seen in the GPT-4 demo video from OpenAI)

But people have reportedly been facing challenges and issues while following this process, as ChatGPT often gives the generic error message that it still can’t analyze images.

(bipolar disorder of AI?)

Nevertheless, we should remember that it has only JUST been released, so it’s natural that issues and bugs will be found. I hope that, with enough time, these problems will be resolved to give a smoother user experience.

Also, remember: this is merely the beginning of the journey of multimodal AI! With the inclusion of audio and video in the near future (fingers crossed ✌️), GPT will surely become an integral part of our lives.

Longer (and Better) Input Processing

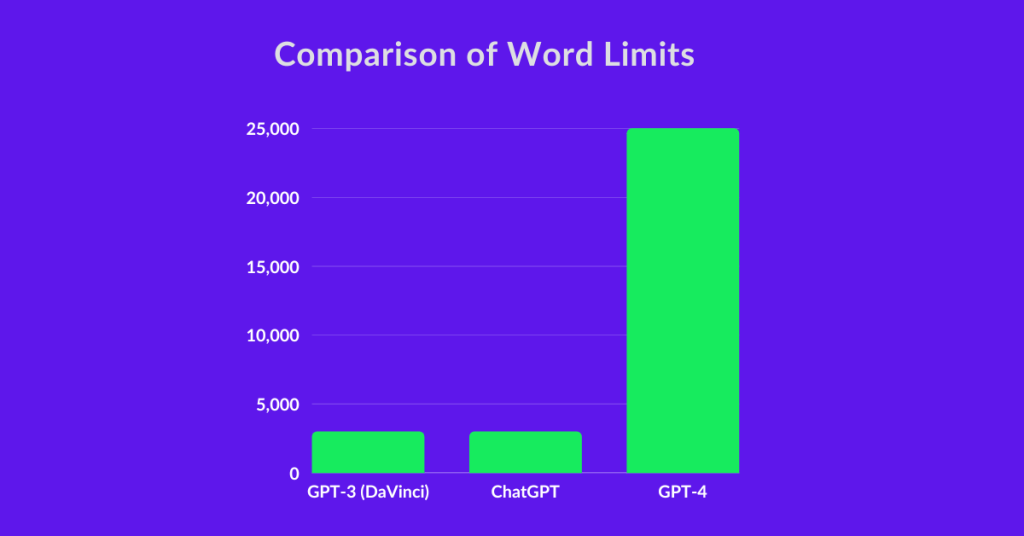

GPT-4 allows you to input up to about 25,000 words in a conversation, up from only about 3,000 previously!

That means better contextualization and more accurate responses from the model. So, users like you and me can literally feed it:

- Books

- Scientific papers

- Knowledge bases

- Documentation

- Legal papers

… and much more, and GPT-4 won’t break a sweat producing the desired output!

Not just that: think of the previous version of ChatGPT and the GPT APIs with their short-term memory. GPT-4 can now hold 8 times as much context as its predecessor, so you can tweak and try your prompts all you want!

The context processing is simply like nothing seen before!

3 Different Modes of ChatGPT

Bad news for the free users of ChatGPT: the GPT-4 model is exclusive to Plus users (for now).

For Plus users, however, 3 different models are now available:

- Default (turbo)

- Legacy

- GPT-4

Here’s how these ChatGPT models compare:

✅ The default turbo model focuses solely on speed. So, for faster responses, ChatGPT Plus users can stick to that.

✅ The legacy model also uses GPT-3.5 like the default one, but is comparatively more suitable for logical reasoning.

✅ Lastly, the GPT-4 model is the superior choice for both reasoning and conciseness. But it’s not as fast as turbo.

Intrigued?

Give it a try from here -> ChatGPT

GPT 3 vs GPT 4: Head-to-Head Comparison

It’s time to pit them head to head! ⚔️

Let’s compare GPT-3 with its successor GPT-4 and see which one comes out on top!

Who Aces More Tests?

Yes, you read that right.

AI can sit for exams and actually pass them with flying colors!

But which model is better at passing exams?

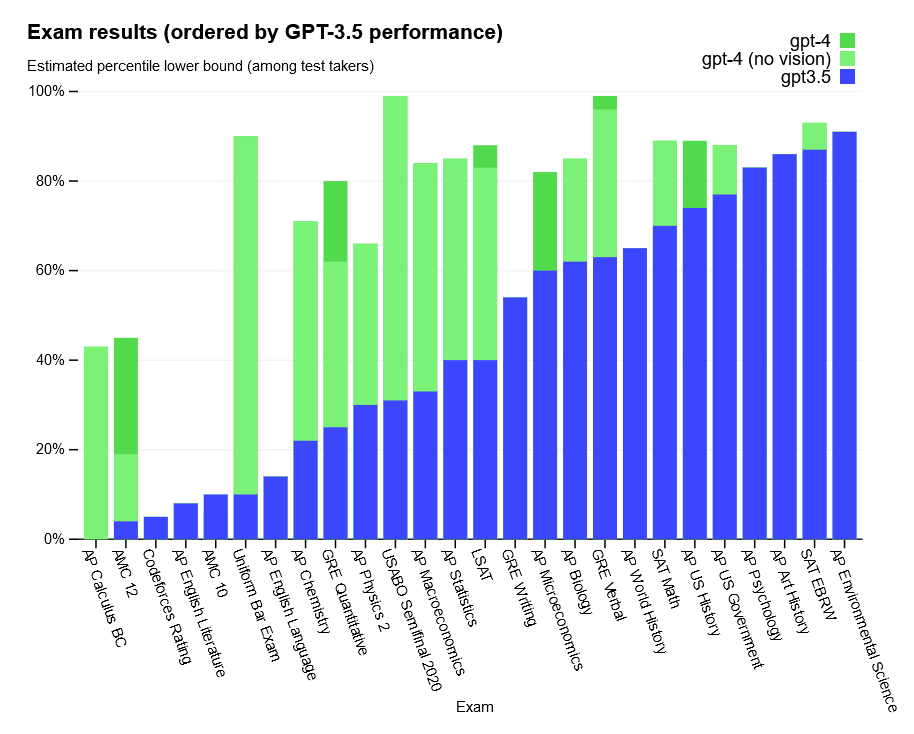

OpenAI has openly (pun intended ) disclosed this information with the below chart:

You can see that several standardized and renowned tests are present on the list, including —

- SAT

- GRE

- Uniform bar exam

- Advanced Placement exams

… among others.

As stated by OpenAI itself, the difference between the outputs of GPT-3.5 and GPT-4 can be subtle in casual conversation (at least for us puny humans).

But these standardized test results clearly show how superior GPT-4 is, even when compared to the GPT-3.5 model.

GPT-4 is the clear winner in acing tests!

Who Processes More Tokens?

As stated above, GPT-4 can process more words. But how?

Well, the numbers given above are estimates. It’s not like GPT-4 will suddenly cut off its work the moment an input crosses the 25,000-word mark.

You see, the GPT model measures its inputs and outputs in a unit called “tokens”. Tokenization is actually quite complex, and that’s why it’s nearly impossible to be 100% accurate with a word count estimate.

However, as a rule of thumb, 1 token works out to roughly 4 characters of English text, or about three-quarters of a word.

So, the previous token limit of GPT (4,000 tokens) is approximately equal to 3,000 words.

Similarly, the current token limit of GPT-4 is 32,000 tokens — converting into words will get you around 25,000 words. ✌️
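If you want to play with these numbers yourself, here is a minimal sketch of the arithmetic. It assumes the rules of thumb used in this article (about 0.75 words and 4 characters per token) and the rounded limits of 4,000 and 32,000 tokens; the function names are made up for illustration, and a real tokenizer will give different counts.

```python
# Rough token/word arithmetic using the rules of thumb from this article.
# These are estimates only; an actual tokenizer will not match them exactly.

WORDS_PER_TOKEN = 0.75   # 1,000 tokens is roughly 750 words
CHARS_PER_TOKEN = 4      # 1 token is roughly 4 characters of English text

PREVIOUS_TOKEN_LIMIT = 4_000   # rounded limit of the previous model
GPT4_TOKEN_LIMIT = 32_000      # limit of the larger GPT-4 version


def tokens_to_words(tokens: int) -> int:
    """Estimate how many words fit into a given number of tokens."""
    return round(tokens * WORDS_PER_TOKEN)


def estimate_tokens(text: str) -> int:
    """Estimate the token count of a text from its length in characters."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_context(text: str, token_limit: int) -> bool:
    """Check whether a text is likely to fit within a token limit."""
    return estimate_tokens(text) <= token_limit


if __name__ == "__main__":
    print(tokens_to_words(PREVIOUS_TOKEN_LIMIT))     # words for the old limit
    print(tokens_to_words(GPT4_TOKEN_LIMIT))         # words for the GPT-4 limit
    print(GPT4_TOKEN_LIMIT // PREVIOUS_TOKEN_LIMIT)  # how many times larger
```

With the 0.75 ratio, 32,000 tokens come out at 24,000 words, which is why the 25,000 figure should be read as a rounded estimate.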

Here’s a simple comparative chart ⤵️

As we can see from the above image, GPT-4 can take in 8 times as much input as its predecessor.

GPT-4 wins the race in processing input!

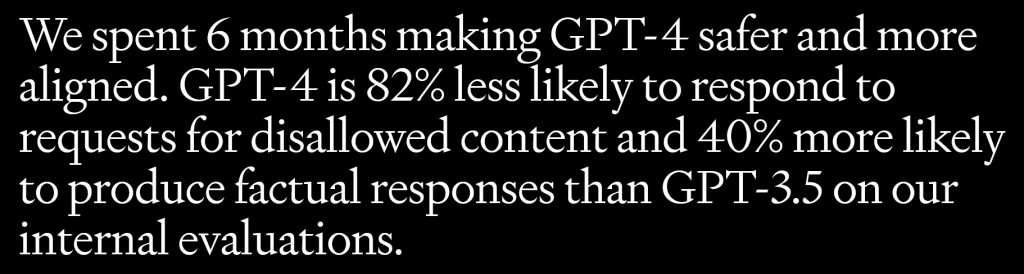

Who is Safer and More Factual?

I’ll just add what OpenAI has to say on this matter

GPT-4 is safer and more factual than the previous GPT versions!

Who is Cheaper?

For a comparative look at the API pricing of GPT-3 and GPT-4, I’m making a simple table below:

(note: here, davinci-003 represents the traditional GPT3 model while GPT3.5 Turbo is the engine behind ChatGPT)

GPT 3 vs GPT 4 Pricing

| Tokens | Words (approx.) | GPT-4 (8k) | GPT-4 (32k) | GPT-3.5 Turbo | davinci-003 |
|---|---|---|---|---|---|
| 1,000 | 750 | $0.09 | $0.18 | $0.002 | $0.02 |
| 10,000 | 7,500 | $0.90 | $1.80 | $0.02 | $0.20 |

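For GPT-4, prompt and completion tokens are billed separately, so the GPT-4 figures above add the two rates together. For the 8k version, that is $0.03 (prompt) + $0.06 (completion) = $0.09 per 1,000 tokens.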

So, it’s evident that GPT-4 is significantly more expensive than both GPT-3 models.

To be specific, GPT-4 (the default 8k version) is:

- 45 times more expensive than GPT-3.5 Turbo (the engine behind ChatGPT), and

- 4.5 times more expensive than the davinci-003 GPT-3 model

Then again, considering the superiority in multimodality, reasoning, and overall performance, the price increase seems understandable.
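For anyone who wants to double-check these multiples, here is a small sketch that recomputes them from the table. The prices are the per-1,000-token figures listed above (with the combined prompt and completion rate for GPT-4); the dictionary keys are just labels taken from the table headers, not official API model names, and the function names are made up for illustration.

```python
from decimal import Decimal

# Price per 1,000 tokens in USD, copied from the comparison table above.
# Keys are table labels, not official API model identifiers.
PRICE_PER_1K = {
    "GPT-4 (8k)": Decimal("0.09"),
    "GPT-4 (32k)": Decimal("0.18"),
    "GPT-3.5 Turbo": Decimal("0.002"),
    "davinci-003": Decimal("0.02"),
}


def cost(model: str, tokens: int) -> Decimal:
    """Estimated cost in USD of processing `tokens` tokens with `model`."""
    return PRICE_PER_1K[model] * Decimal(tokens) / Decimal(1000)


def price_ratio(model: str, baseline: str) -> Decimal:
    """How many times more expensive `model` is than `baseline`."""
    return PRICE_PER_1K[model] / PRICE_PER_1K[baseline]


if __name__ == "__main__":
    print(cost("GPT-4 (8k)", 10_000))
    print(price_ratio("GPT-4 (8k)", "GPT-3.5 Turbo"))
    print(price_ratio("GPT-4 (8k)", "davinci-003"))
```

Using `Decimal` instead of floats keeps the small dollar amounts exact, so the ratios come out as clean numbers.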

GPT-4 is more expensive than the previous GPT versions!

So, Did the World Change or What?!

The short answer?

No, it certainly didn’t.

As for the rumor that GPT-4 would be 500 times more “powerful” than GPT-3.5 with 100 trillion parameters: it was simply an internet hoax (as clarified by Sam Altman even before the release).

But, but, but…

It has the POTENTIAL to change the world if we’re up for it!

Whenever the world changed throughout history, the momentum didn’t come from the tools or technology. It was us, humans, who made those changes happen, fueled by our imagination and creativity!

Let’s be creative and change the world for the better using the immense power of AI ✌️

Frequently Asked Questions

Does GPT4 come with a separate API?

Yes, it does!

To access the GPT-4 API and build your GPT-4 powered applications and solutions, you can join this waitlist.
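Once you have access, a request to GPT-4 looks roughly like the sketch below. It assumes OpenAI’s Chat Completions HTTP endpoint and the `gpt-4` model name; the helper name `build_gpt4_request` and the placeholder API key are made up for illustration. The code only builds the request and does not send it, so you can inspect it safely before wiring it into an app.

```python
import json
import urllib.request

# Assumed endpoint: OpenAI's Chat Completions API.
API_URL = "https://api.openai.com/v1/chat/completions"


def build_gpt4_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat request that asks GPT-4 a question."""
    payload = {
        "model": "gpt-4",
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


if __name__ == "__main__":
    # "YOUR_API_KEY" is a placeholder; use the key from your own account.
    request = build_gpt4_request("Summarize GPT-4 in one sentence.", "YOUR_API_KEY")
    print(request.get_method(), request.full_url)
```

Sending it is then a matter of passing the request to `urllib.request.urlopen` (or using an HTTP client of your choice) once your waitlist access is approved.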

Can GPT-4 generate images?

While the latest GPT version has finally introduced multimodality, it’s still limited to taking image inputs and analyzing them (as of now). GPT-4 is still unable to generate images on its own.

For generating images using AI, OpenAI has a separate product called DALL·E 2.

How much does GPT-4 cost?

GPT-4 comes in 2 different versions (the default 8k version and the advanced 32k version), each with its own pricing. The difference between them is the context length: how many tokens of prompt and response the model can handle at once.

Go to this section for a detailed pricing comparison of GPT-4 with GPT-3.

Conclusion

And that’s about it!

Please note that this is just early news and information on this latest AI model, and a lot is still left to uncover.

Share your findings, and point out anything I missed, in the comments below. I’m all ears!
